Yang Yu1, Zheng Yang1,2, Shanshan Lin1, and Yue Zhang1,2

1School of Information and Engineering, Shenyang University of Technology, Shenyang 110023, China

2College of Information, Shenyang Institute of Engineering, Shenyang 110136, China

Received: August 3, 2025

Accepted: September 18, 2025

Publication Date: March 15, 2026

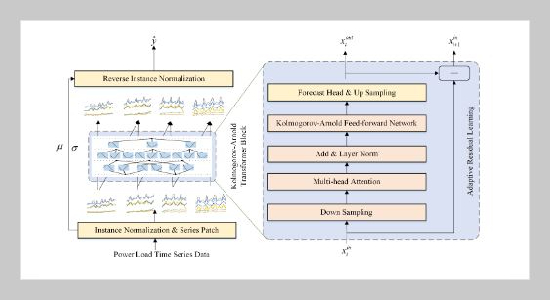

The overall framework of KANformer, comprising time series patch partitioning, the Kolmogorov-Arnold Transformer block, and adaptive residual learning.

Copyright The Author(s). This is an open access article distributed under the terms of the Creative Commons Attribution License (CC BY 4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are cited.

DOI: https://doi.org/10.6180/jase.202608_31.043

With economic growth and rising living standards, electricity demand is becoming more complex and volatile. Power load forecasting is a key part of power system planning, operation, and management: accurate forecasts enable grid dispatching departments to draw up reasonable generation plans and schedule equipment maintenance in advance. However, two issues remain in current power load forecasting methods: (1) They commonly build the overall network from multilayer perceptrons, which makes it extremely difficult to interpret how the models arrive at specific predictions. (2) They commonly use a one-step generation paradigm with a customized forecasting head, which ignores the temporal dependencies within the forecast series and requires separate training for each prediction length. To address these issues, a novel interpretable Kolmogorov-Arnold network (KAN)-based Transformer architecture (KANformer) is proposed as the model backbone to capture the variation patterns of power load time-series data. Specifically, KANformer recasts the forecasting task as a standard language modeling task. It uses patching to project the time series into patch-based representations. During training, an autoregressive optimization objective replaces the traditional one-step generation scheme. This allows the model to capture temporal dependencies within the prediction range at the patch level through autoregressive inference, and to adapt to power grid load datasets with different prediction settings without any modification. Experimental results on two real-world power grid load datasets show that KANformer achieves superior performance and generalization ability.

Keywords: Time series analysis, power load forecasting, Kolmogorov-Arnold network.
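The patch-level autoregressive scheme described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the non-overlapping patch layout, and the `repeat_last_patch` predictor (a naive stand-in for the trained KANformer backbone) are all assumptions made for illustration. The sketch only shows how a series might be split into patches, how next-patch training pairs could be formed, and how one predictor can serve any forecast horizon by generating patches one after another.

```python
import numpy as np


def make_patches(series, patch_len):
    """Split a 1-D series into non-overlapping patches (assumed layout).

    Any leading remainder is dropped so the last patch ends at the
    most recent observation.
    """
    series = np.asarray(series, dtype=float)
    n_patches = len(series) // patch_len
    if n_patches == 0:
        raise ValueError("series is shorter than one patch")
    trimmed = series[len(series) - n_patches * patch_len:]
    return trimmed.reshape(n_patches, patch_len)


def next_patch_pairs(patches):
    """Form (input, target) pairs for next-patch prediction.

    Patch i is the input and patch i + 1 is the target, mirroring
    next-token prediction in language modeling.
    """
    return patches[:-1], patches[1:]


def autoregressive_forecast(series, predict_next_patch, horizon, patch_len):
    """Roll a next-patch predictor forward until `horizon` values exist.

    `predict_next_patch` is a hypothetical callable that maps the patch
    history (n_patches, patch_len) to one new patch (patch_len,).
    """
    patches = make_patches(series, patch_len)
    n_steps = -(-horizon // patch_len)  # ceiling division
    generated = []
    for _ in range(n_steps):
        new_patch = np.asarray(predict_next_patch(patches), dtype=float)
        generated.append(new_patch)
        # Feed the generated patch back in as context for the next step.
        patches = np.vstack([patches, new_patch])
    return np.concatenate(generated)[:horizon]


def repeat_last_patch(patches):
    """Naive placeholder predictor: repeat the most recent patch."""
    return patches[-1]


if __name__ == "__main__":
    load = np.arange(12)  # toy series, not real load data
    inputs, targets = next_patch_pairs(make_patches(load, patch_len=4))
    print(inputs.shape, targets.shape)
    print(autoregressive_forecast(load, repeat_last_patch, horizon=6, patch_len=4))
```

Because the rollout loop only depends on the number of patches requested, the same predictor can be reused for different prediction lengths, which is the property the abstract attributes to the autoregressive formulation.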
