- [1] H. Zhou, S. Zhang, J. Peng, S. Zhang, J. Li, H. Xiong, and W. Zhang. “Informer: Beyond efficient transformer for long sequence time-series forecasting”. In: Proceedings of the AAAI conference on artificial intelligence. 35. 2021, 11106–11115. DOI: 10.1609/aaai.v35i12.17325.

- [2] Z. Karevan and J. A. Suykens, (2020) “Transductive LSTM for time-series prediction: An application to weather forecasting” Neural Networks 125: 1–9. DOI: 10.1016/j.neunet.2019.12.030.

- [3] L. Martín, L. F. Zarzalejo, J. Polo, A. Navarro, R. Marchante, and M. Cony, (2010) “Prediction of global solar irradiance based on time series analysis: Application to solar thermal power plants energy production planning” Solar Energy 84(10): 1772–1781. DOI: 10.1016/j.solener.2010.07.002.

- [4] X. Yin, G. Wu, J. Wei, Y. Shen, H. Qi, and B. Yin, (2021) “Deep learning on traffic prediction: Methods, analysis, and future directions” IEEE Transactions on Intelligent Transportation Systems 23(6): 4927–4943. DOI: 10.1109/TITS.2021.3054840.

- [5] A. A. Laghari, V. V. Estrela, and S. Yin, (2024) “How to collect and interpret medical pictures captured in highly challenging environments that range from nanoscale to hyperspectral imaging” Current Medical Imaging 20(1): e281222212228. DOI: 10.2174/1573405619666221228094228.

- [6] A. A. Laghari, Y. Sun, M. Alhussein, K. Aurangzeb, M. S. Anwar, and M. Rashid, (2023) “Deep residual dense network based on bidirectional recurrent neural network for atrial fibrillation detection” Scientific Reports 13(1): 15109. DOI: 10.1038/s41598-023-40343-x.

- [7] A. A. Laghari, S. Shahid, R. Yadav, S. Karim, A. Khan, H. Li, and Y. Shoulin, (2023) “The state of art and review on video streaming” Journal of High Speed Networks 29(3): 211–236. DOI: 10.3233/JHS-222087.

- [8] A. A. Laghari, V. V. Estrela, H. Li, Y. Shoulin, A. A. Khan, M. S. Anwar, A. Wahab, and K. Bouraqia, (2024) “Quality of experience assessment in virtual/augmented reality serious games for healthcare: A systematic literature review” Technology and Disability 36(1-2): 17–28. DOI: 10.3233/TAD-230035.

- [9] S. Yin, H. Li, L. Teng, A. A. Laghari, A. Almadhor, M. Gregus, and G. A. Sampedro, (2024) “Brain CT image classification based on mask RCNN and attention mechanism” Scientific Reports 14(1): 29300. DOI: 10.1038/s41598-024-78566-1.

- [10] M. A. Munir, R. A. Shah, M. Ali, A. A. Laghari, A. Almadhor, and T. R. Gadekallu, (2024) “Enhancing Gene Mutation Prediction with Sparse Regularized Autoencoders in Lung Cancer Radiomics Analysis” IEEE Access. DOI: 10.1109/ACCESS.2024.3523330.

- [11] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin. “Attention is all you need”. In: Advances in Neural Information Processing Systems. 30. 2017.

- [12] A. Gu, K. Goel, and C. Ré, (2021) “Efficiently modeling long sequences with structured state spaces” arXiv preprint arXiv:2111.00396. DOI: 10.48550/arXiv.2111.00396.

- [13] J. T. Smith, A. Warrington, and S. W. Linderman, (2022) “Simplified state space layers for sequence modeling” arXiv preprint arXiv:2208.04933. DOI: 10.48550/arXiv.2208.04933.

- [14] A. Gu, K. Goel, A. Gupta, and C. Ré, (2022) “On the Parameterization and Initialization of Diagonal State Space Models” Advances in Neural Information Processing Systems 35: 35971–35983.

- [15] A. Gu, I. Johnson, K. Goel, K. Saab, T. Dao, A. Rudra, and C. Ré, (2021) “Combining recurrent, convolutional, and continuous-time models with linear state space layers” Advances in Neural Information Processing Systems 34: 572–585.

- [16] A. Katharopoulos, A. Vyas, N. Pappas, and F. Fleuret. “Transformers are RNNs: Fast autoregressive transformers with linear attention”. In: International conference on machine learning. PMLR. 2020, 5156–5165.

- [17] T. Dao, D. Y. Fu, K. K. Saab, A. W. Thomas, A. Rudra, and C. Ré. “Hungry Hungry Hippos: Towards Language Modeling with State Space Models”. In: International Conference on Learning Representations. 2023. DOI: 10.48550/arXiv.2212.14052.

- [18] J. T. H. Smith, A. Warrington, and S. W. Linderman. “Simplified State Space Layers for Sequence Modeling”. In: International Conference on Learning Representations. 2023. DOI: 10.48550/arXiv.2208.04933.

- [19] A. Gu and T. Dao, (2024) “Mamba: Linear-time sequence modeling with selective state spaces” Conference on Language Modeling.

- [20] A. Dosovitskiy, L. Beyer, A. Kolesnikov, D. Weissenborn, X. Zhai, T. Unterthiner, M. Dehghani, M. Minderer, G. Heigold, S. Gelly, et al. “An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale”. In: International Conference on Learning Representations. 2020.

- [21] E. S. Gardner Jr, (1985) “Exponential smoothing: The state of the art” Journal of Forecasting 4(1): 1–28. DOI: 10.1002/for.3980040103.

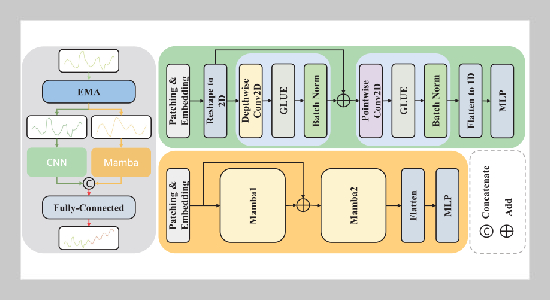

- [22] J. Tao, L. Cao, H. Wang, C. Xie, J. Li, and L. Zhou, (2026) “PaDuM: Patch-Based Dual-Stream Network with CNN and Mamba for Time Series Forecasting” Submitted to the 2026 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP 2026). DOI: 10.48550/arXiv.2411.05793.

- [23] H. Wu, J. Xu, J. Wang, and M. Long. “Autoformer: Decomposition transformers with auto-correlation for long-term series forecasting”. In: Advances in Neural Information Processing Systems. 34. 2021, 22419–22430.

- [24] T. Zhou, Z. Ma, Q. Wen, X. Wang, L. Sun, and R. Jin. “FEDformer: Frequency enhanced decomposed transformer for long-term series forecasting”. In: International Conference on Machine Learning. 162. Proceedings of Machine Learning Research. PMLR. 2022, 27268–27286.

- [25] A. Zeng, M. Chen, L. Zhang, and Q. Xu. “Are transformers effective for time series forecasting?” In: Proceedings of the AAAI Conference on Artificial Intelligence. 37. 2023, 11121–11128. DOI: 10.1609/aaai.v37i9.26317.

- [26] A. Stitsyuk and J. Choi. “xPatch: Dual-Stream Time Series Forecasting with Exponential Seasonal-Trend Decomposition”. In: Proceedings of the AAAI Conference on Artificial Intelligence. 39. 2025, 20601–20609. DOI: 10.1609/aaai.v39i19.34270.

- [27] Y. Nie, H. N. Nguyen, P. Sinthong, and J. Kalagnanam. “A Time Series is Worth 64 Words: Long-term Forecasting with Transformers”. In: International Conference on Learning Representations. 2023. DOI: 10.48550/arXiv.2211.14730.

- [28] Y. Zhang and J. Yan. “Crossformer: Transformer utilizing cross-dimension dependency for multivariate time series forecasting”. In: The Eleventh International Conference on Learning Representations (ICLR). 2022.

- [29] L. Sifre and S. Mallat, (2014) “Rigid-motion scattering for texture classification” International Journal of Computer Vision. DOI: 10.48550/arXiv.1403.1687.

- [30] C. Szegedy, W. Liu, Y. Jia, P. Sermanet, S. Reed, D. Anguelov, D. Erhan, V. Vanhoucke, and A. Rabinovich. “Going deeper with convolutions”. In: Proceedings of the IEEE conference on computer vision and pattern recognition. 2015.

- [31] S. Ioffe and C. Szegedy. “Batch normalization: Accelerating deep network training by reducing internal covariate shift”. In: International conference on machine learning. PMLR. 2015, 448–456.

- [32] A. G. Howard, M. Zhu, B. Chen, D. Kalenichenko, W. Wang, T. Weyand, M. Andreetto, and H. Adam, (2017) “Mobilenets: Efficient convolutional neural networks for mobile vision applications” Computer Vision and Pattern Recognition. DOI: 10.48550/arXiv.1704.04861.

- [33] F. Chollet. “Xception: Deep learning with depthwise separable convolutions”. In: Proceedings of the IEEE conference on computer vision and pattern recognition. 2017.

- [34] A. Trockman and J. Z. Kolter, (2022) “Patches Are All You Need?” Transactions on Machine Learning Research. DOI: 10.48550/arXiv.2201.09792.

- [35] Z. Wang et al., (2025) “Is mamba effective for time series forecasting?” Neurocomputing 619: 129178. DOI: 10.1016/j.neucom.2024.129178.

- [36] M. A. Ahamed and Q. Cheng, (2024) “TimeMachine: A Time Series is Worth 4 Mambas for Long-term Forecasting” ECAI 2024: 27th European Conference on Artificial Intelligence. DOI: 10.3233/faia240677.

- [37] A. Trindade, (2015) “ElectricityLoadDiagrams20112014” UCI Machine Learning Repository 10: C58C86.

- [38] G. Lai, W.-C. Chang, Y. Yang, and H. Liu. “Modeling long- and short-term temporal patterns with deep neural networks”. In: The 41st international ACM SIGIR conference on research & development in information retrieval. 2018, 95–104. DOI: 10.1145/3209978.3210006.

- [39] X. Wang, T. Zhou, Q. Wen, J. Gao, B. Ding, and R. Jin. “CARD: Channel Aligned Robust Blend Transformer for Time Series Forecasting”. In: The Twelfth International Conference on Learning Representations. 2024. DOI: 10.48550/arXiv.2305.12095.

- [40] S. Wang, H. Wu, X. Shi, T. Hu, H. Luo, L. Ma, J. Y. Zhang, and J. Zhou. “TimeMixer: Decomposable Multiscale Mixing for Time Series Forecasting”. In: International Conference on Learning Representations (ICLR). 2024. DOI: 10.48550/arXiv.2405.14616.

- [41] H. Wu, T. Hu, Y. Liu, H. Zhou, J. Wang, and M. Long. “TimesNet: Temporal 2D-Variation Modeling for General Time Series Analysis”. In: International Conference on Learning Representations. 2023. DOI: 10.48550/arXiv.2210.02186.